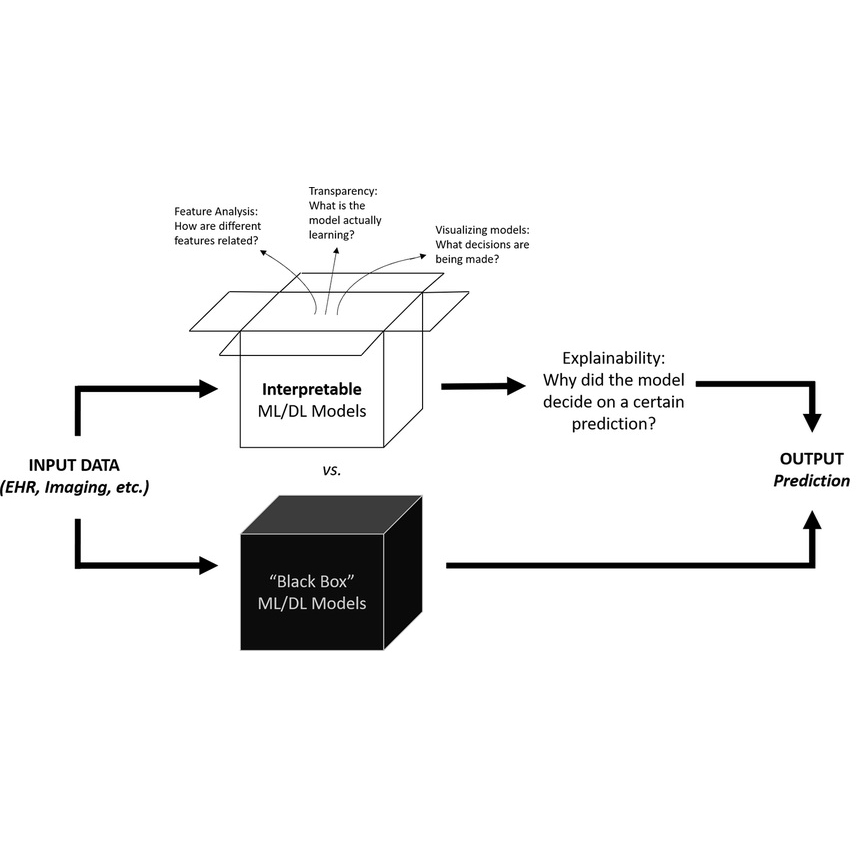

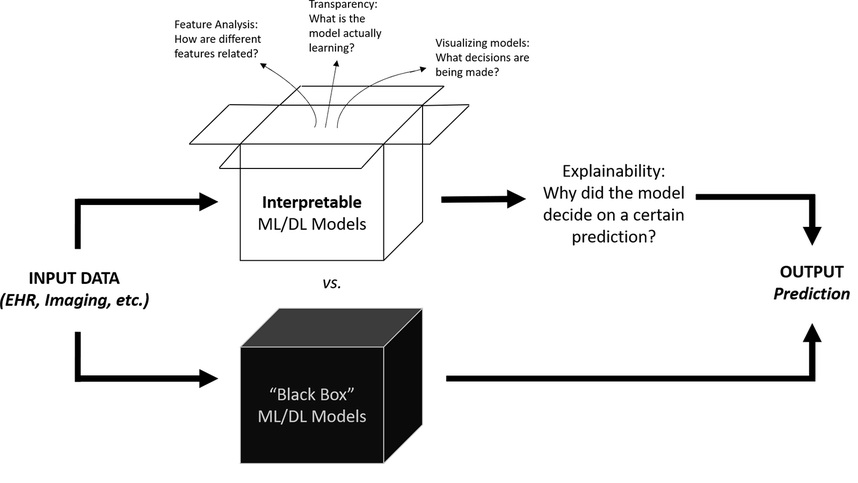

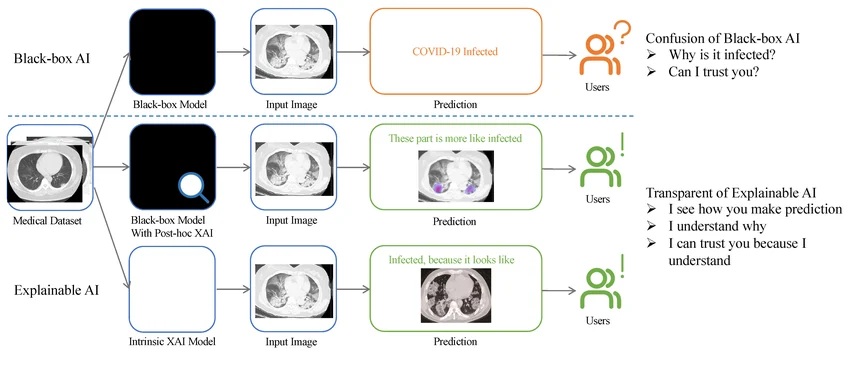

As cyberattacks become more frequent and complex, organizations are turning to Artificial Intelligence (AI) to defend their digital assets. Standard AI is extremely fast at spotting patterns, but it often works like a “black box”: it might tell a security team “This file is a virus” or “A cyberattack is coming from this IP address” without ever explaining why. For a security professional, a simple “Yes” or “No” isn’t enough. If the AI is wrong, it could block an important company document or disrupt services the company provides; if it’s right, the team still needs to know how the attacker got in to stop it from happening again. This is where Explainable AI (XAI) comes in.

What is Explainable AI (XAI)?

XAI is a set of tools and methods designed to make the “internal thought process” of an AI understandable to humans. In cybersecurity, an XAI system doesn’t just detect a threat; it also provides a justification for its decision that a human can check.

When monitoring assets and detecting attacks, XAI helps security teams understand what “normal” looks like instead of just watching for “bad” things. If the AI flags a login attempt as suspicious, XAI can point to specific reasons: “The user is logging in from a new country” or “This account is suddenly accessing 2,000 files it never touched before.” XAI can also generate maps or charts showing exactly where a network’s behavior deviated from the norm, helping humans spot the “smoking gun” quickly.
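The kind of explanation described above can be sketched in a few lines of Python. This is a minimal, rule-based sketch, assuming a per-user baseline of known countries and a typical daily file count; the names `UserBaseline`, `LoginEvent`, `explain_login`, and the 10x threshold are hypothetical, chosen only for illustration. Production XAI tools usually explain trained models (for example with feature-attribution methods), but the output has the same shape: a flag plus the reasons behind it.

```python
from dataclasses import dataclass, field


@dataclass
class UserBaseline:
    """What "normal" looks like for one account (hypothetical schema)."""
    known_countries: set[str] = field(default_factory=set)
    typical_daily_files: int = 50  # usual number of files touched per day


@dataclass
class LoginEvent:
    user: str
    country: str
    files_accessed: int


def explain_login(event: LoginEvent, baseline: UserBaseline,
                  file_ratio_limit: float = 10.0) -> list[str]:
    """Return human-readable reasons why this login deviates from the baseline.

    An empty list means nothing unusual was found.
    """
    reasons = []
    if event.country not in baseline.known_countries:
        reasons.append(f"The user is logging in from a new country ({event.country})")
    # Guard against a zero baseline so the ratio below never divides by zero.
    typical = max(baseline.typical_daily_files, 1)
    if event.files_accessed > file_ratio_limit * typical:
        reasons.append(
            f"This account is suddenly accessing {event.files_accessed} files "
            f"(about {event.files_accessed / typical:.0f}x its usual {typical})"
        )
    return reasons


baseline = UserBaseline(known_countries={"US"}, typical_daily_files=40)
event = LoginEvent(user="alice", country="BR", files_accessed=2000)
for reason in explain_login(event, baseline):
    print("-", reason)
# - The user is logging in from a new country (BR)
# - This account is suddenly accessing 2000 files (about 50x its usual 40)
```

Returning plain-language reasons rather than a single score is the design choice that matters here: the analyst can check each reason against what they know about the user.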

When it comes to mitigation, XAI doesn’t just sound the alarm; it helps build the shield. By explaining the nature of the attack, it can suggest an appropriate way to stop it. If the AI explains, “This is a brute-force attack targeting the HR database,” the suggested action is clear: “Temporarily lock the targeted accounts and require a password reset.”
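Turning an explanation into a suggested response can be as simple as a lookup from attack type to a response template. The sketch below assumes the model's explanation arrives as a structured label (`attack_type`) plus a target; the `Explanation` class, the `PLAYBOOK` entries, and `suggest_mitigation` are hypothetical, and the suggestion is meant for an analyst to approve rather than to execute automatically.

```python
from dataclasses import dataclass


@dataclass
class Explanation:
    attack_type: str  # structured label from the model (hypothetical label set)
    target: str       # asset the model says is under attack


# Hypothetical playbook: attack type -> recommended response template.
PLAYBOOK = {
    "brute_force": "Temporarily lock the targeted accounts on {target} "
                   "and require a password reset.",
    "data_exfiltration": "Block outbound traffic from {target} to the unknown "
                         "destination and preserve the relevant logs.",
}


def suggest_mitigation(expl: Explanation) -> str:
    """Map an explained attack to a suggested action for analyst review."""
    template = PLAYBOOK.get(expl.attack_type)
    if template is None:
        # No known response for this attack type: escalate instead of guessing.
        return f"No playbook entry for '{expl.attack_type}'; escalate to an analyst."
    return template.format(target=expl.target)


print(suggest_mitigation(Explanation("brute_force", "the HR database")))
# Temporarily lock the targeted accounts on the HR database and require a password reset.
```

The suggestion is only as good as the explanation: if the model labels the attack wrongly, the playbook will confidently propose the wrong fix, which is why the human review discussed next still matters.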

The Importance of the “User-in-the-Loop”

The most critical part of XAI is that it keeps a human—the User-in-the-Loop—at the center of the decision. Cybersecurity is high-stakes; a mistake could shut down a hospital’s network or a city’s power grid. XAI increases trust, facilitates collaboration and provides accountability.

- Trust and Validation: When an AI can explain itself, a human expert can quickly verify whether the alert is a real threat or a “false positive” (a harmless event wrongly flagged as an attack).

- Collaboration: Humans bring “common sense” and context that AI lacks. For example, the AI might flag a large data transfer as an attack, but a human knows it’s just the annual company backup. XAI allows the human to see the AI’s logic, agree or disagree, and teach the system to be better next time.

- Accountability: If something goes wrong, XAI provides a clear “paper trail” showing why a certain decision was made, which is essential for legal and safety audits.
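The review loop described in these three points might look like the following sketch: the analyst sees the alert together with its reasons, records a decision, and every review is appended to an audit log as a JSON line. The `Alert` and `Review` classes, `review_alert`, and the two decision labels are hypothetical; the collected `feedback` pairs stand in for the labeled examples a real system would use to retrain or tune its model.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone

DECISIONS = ("confirmed", "false_positive")  # hypothetical verdict labels


@dataclass
class Alert:
    alert_id: str
    verdict: str        # the model's call, e.g. "High Risk"
    reasons: list[str]  # the explanation shown to the analyst


@dataclass
class Review:
    alert_id: str
    analyst: str
    decision: str       # one of DECISIONS
    note: str           # the human context the AI lacked
    reviewed_at: str    # UTC timestamp in ISO 8601 format


def review_alert(alert: Alert, analyst: str, decision: str, note: str,
                 audit_log: list[str],
                 feedback: list[tuple[list[str], bool]]) -> Review:
    """Record an analyst's decision on an explained alert."""
    if decision not in DECISIONS:
        raise ValueError(f"unknown decision: {decision!r}")
    review = Review(alert.alert_id, analyst, decision, note,
                    datetime.now(timezone.utc).isoformat())
    # Accountability: keep the alert, its reasons, and the human decision together.
    audit_log.append(json.dumps({"alert": asdict(alert), "review": asdict(review)}))
    # Collaboration: store (reasons, was_real_threat) as a label for later retraining.
    feedback.append((list(alert.reasons), decision == "confirmed"))
    return review


audit_log: list[str] = []
feedback: list[tuple[list[str], bool]] = []
backup_alert = Alert("A-1", "High Risk", ["Large data transfer to an external server"])
review_alert(backup_alert, "analyst-1", "false_positive",
             "Annual company backup", audit_log, feedback)
print(audit_log[0])
print(feedback)
```

Storing the analyst's note next to the model's reasons is what makes the log useful in an audit: it records both why the AI raised the alert and why a human overrode it.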

The main differences between standard AI and explainable AI (XAI) are the following:

- Output: Standard AI might report only “High Risk detected,” while XAI would say “High Risk: unusual data flow to an unknown IP detected.”

- Human role: With standard AI, the human can only blindly trust or ignore the alert; with XAI, the human reviews the evidence and takes informed action.

- Learning: Instead of the AI algorithms learning alone, the human can provide feedback that refines them, which makes the learning process stronger.

XAI transforms AI from a mysterious oracle into a transparent partner, ensuring that while the computer does the “heavy lifting” of data analysis, the human stays in control of the final defense strategy.